I used to think one server was enough for my homelab. Then I added a second, then a third, then a containerized microservice that needed its own domain. Suddenly, my single VPS was drowning in a sea of ports: 8080, 8443, 3000, 5000, 9200… trying to remember which port hosted which app became a nightmare. Accessing my blog meant typing localhost:3000, while my media server hid behind localhost:8096. It was messy, insecure, and frankly, embarrassing.

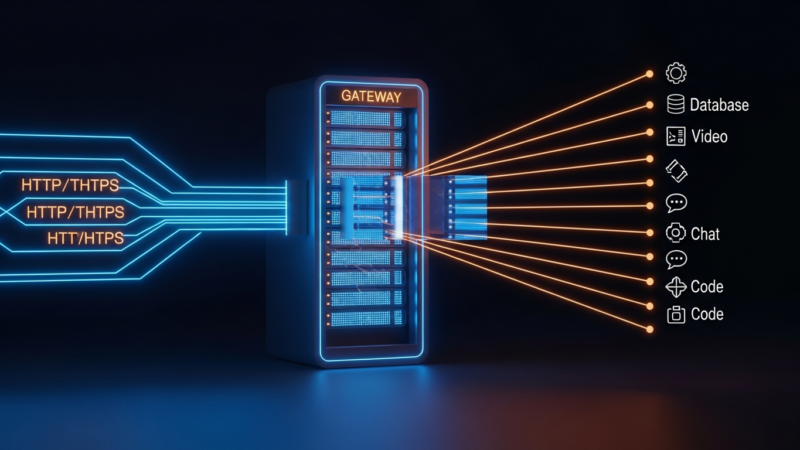

The solution wasn’t more servers. It was better routing. By configuring Nginx as a reverse proxy, I consolidated all traffic through ports 80 and 443, using domain names to direct requests to the correct internal service. This setup didn’t just clean up my browser tabs; it improved security, simplified SSL management, and boosted performance. Here is how I routed ten internal services from a single server without breaking a sweat.

Why Nginx? The Engine Behind the Scenes

Nginx has long been the gold standard for high-performance HTTP servers. Unlike traditional servers that create a new thread for each request, Nginx uses an asynchronous, event-driven architecture. This allows it to handle thousands of concurrent connections with minimal memory footprint. For a single-server setup hosting multiple services, this efficiency is non-negotiable.

Recent benchmarks show Nginx handling static content and reverse proxying tasks with latency often under 10ms, even under heavy load. While Nginx Plus offers advanced features like active health checks and dynamic upstream configuration, the open-source version provides 95% of the functionality needed for personal or small-scale professional deployments. The key is understanding how to configure its core directives effectively.

When you place Nginx in front of your applications, it acts as a gatekeeper. It receives the client’s request, decides which backend server should handle it, forwards the request, and then sends the response back to the client. The client never knows the internal network topology. This abstraction layer is critical for security and flexibility.

Setting Up the Foundation: Docker and Networking

Before touching Nginx, I needed a consistent way to manage my services. I moved all my applications into Docker containers. This ensured that each service ran in an isolated environment with its own dependencies. More importantly, Docker provides built-in DNS on user-defined networks. If I named a container blog, other containers on the same network could reach it at http://blog:3000.

I created a custom Docker network called proxy-net. All my services and the Nginx container joined this network. This setup allowed Nginx to resolve container names directly, eliminating the need to hardcode IP addresses. One caveat: Nginx resolves a hostname in proxy_pass once, when it loads its configuration. If a container restarts and gets a new IP, Nginx keeps using the old address until it is reloaded, unless the name is re-resolved at request time with the resolver directive.
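
For Nginx to follow a container to a new IP without a reload, the name has to be looked up at request time. A minimal sketch, assuming Docker’s embedded DNS server at 127.0.0.11 (the address Docker uses on user-defined networks); the variable name $blog_upstream and the 10-second TTL are illustrative choices, not taken from the original setup:

```nginx
server {
    listen 80;
    server_name blog.example.com;

    # Docker's embedded DNS on user-defined networks; cache answers for 10s.
    resolver 127.0.0.11 valid=10s;

    location / {
        # Putting the target in a variable makes Nginx resolve "app1"
        # at request time instead of once at config load.
        set $blog_upstream http://app1:3000;
        proxy_pass $blog_upstream;
    }
}
```

The trade-off: a backend name that cannot be resolved now surfaces as a 502 at request time rather than as an error when Nginx starts.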

Here is the basic structure of my docker-compose.yml for the proxy:

```yaml
services:
  nginx:
    image: nginx:alpine
    ports:
      - "80:80"
      - "443:443"
    volumes:
      - ./nginx/conf.d:/etc/nginx/conf.d
      - ./nginx/certs:/etc/nginx/certs
    networks:
      - proxy-net
    depends_on:
      - app1
      - app2

  app1:
    image: my-blog-app
    networks:
      - proxy-net

  # Placeholder for the second service named in depends_on; Compose rejects
  # a dependency on an undefined service. The image name is illustrative.
  app2:
    image: my-media-app
    networks:
      - proxy-net

networks:
  proxy-net:
    driver: bridge
```

This separation of concerns is vital. Nginx handles the external-facing traffic, while the application containers handle the business logic. If I need to update the blog software, I just restart the app1 container. Nginx continues to route traffic seamlessly.

Routing Logic: Domain-Based Virtual Hosts

The core of the setup is Nginx’s server blocks. I used domain-based routing to direct traffic. Instead of using paths like example.com/blog, I used subdomains: blog.example.com, media.example.com, etc. This is cleaner and avoids complex path-based routing rules.

For each service, I created a separate configuration file in /etc/nginx/conf.d/. Here is an example for a Node.js application:

```nginx
# Redirect plain HTTP to HTTPS.
server {
    listen 80;
    server_name blog.example.com;
    return 301 https://$host$request_uri;
}

server {
    # On Nginx 1.25.1+, "listen 443 ssl;" plus a separate "http2 on;" is the
    # preferred form; the combined syntax still works but logs a warning.
    listen 443 ssl http2;
    server_name blog.example.com;

    ssl_certificate     /etc/nginx/certs/blog.crt;
    ssl_certificate_key /etc/nginx/certs/blog.key;

    location / {
        proxy_pass http://app1:3000;

        # WebSocket support: HTTP/1.1 plus the Upgrade/Connection headers.
        proxy_http_version 1.1;
        proxy_set_header Upgrade $http_upgrade;
        proxy_set_header Connection 'upgrade';
        proxy_cache_bypass $http_upgrade;

        # Pass the original host, client IP, and scheme to the backend.
        proxy_set_header Host $host;
        proxy_set_header X-Real-IP $remote_addr;
        proxy_set_header X-Forwarded-For $proxy_add_x_forwarded_for;
        proxy_set_header X-Forwarded-Proto $scheme;
    }
}
```

Notice the proxy_http_version 1.1 and the WebSocket headers. Many modern applications, including chat apps and real-time dashboards, rely on WebSockets. Without these headers, the WebSocket handshake fails and the connection drops. The remaining proxy_set_header directives ensure that the backend application knows the real IP of the client and the original protocol used, which is crucial for logging and security policies.
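
Hardcoding Connection 'upgrade' sends that header on every request, WebSocket or not. A common alternative, described in the Nginx WebSocket proxying documentation, derives the value from the client’s Upgrade header with a map. A sketch, assuming the map sits at http level (files in conf.d are included there in the stock nginx image); $connection_upgrade is only a conventional variable name:

```nginx
# http context: choose the Connection header per request.
map $http_upgrade $connection_upgrade {
    default upgrade;   # client sent an Upgrade header (e.g. WebSocket)
    ''      close;     # ordinary request: no Upgrade header present
}

server {
    listen 443 ssl;
    server_name blog.example.com;

    ssl_certificate     /etc/nginx/certs/blog.crt;
    ssl_certificate_key /etc/nginx/certs/blog.key;

    location / {
        proxy_pass http://app1:3000;
        proxy_http_version 1.1;
        proxy_set_header Upgrade $http_upgrade;
        proxy_set_header Connection $connection_upgrade;
        proxy_set_header Host $host;
    }
}
```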

I repeated this pattern for all ten services. Each had its own SSL certificate (managed via Certbot for automation) and its own proxy configuration. This modularity means I can debug one service without affecting the others.
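
Repeating the same proxy headers in ten files invites drift. One way to keep them in sync is the include directive. A sketch under assumptions: the snippets directory at /etc/nginx/snippets is hypothetical (it would need its own volume mount), and the media service name, port 8096, and certificate file names are illustrative:

```nginx
# --- file: /etc/nginx/snippets/proxy-headers.inc (hypothetical path) ---
proxy_http_version 1.1;
proxy_set_header Host $host;
proxy_set_header X-Real-IP $remote_addr;
proxy_set_header X-Forwarded-For $proxy_add_x_forwarded_for;
proxy_set_header X-Forwarded-Proto $scheme;

# --- file: /etc/nginx/conf.d/media.conf ---
server {
    listen 443 ssl;
    server_name media.example.com;

    ssl_certificate     /etc/nginx/certs/media.crt;   # illustrative file name
    ssl_certificate_key /etc/nginx/certs/media.key;   # illustrative file name

    location / {
        proxy_pass http://app2:8096;                  # illustrative service and port
        include /etc/nginx/snippets/proxy-headers.inc;
    }
}
```

The snippet uses a non-.conf extension and lives outside conf.d so that the wildcard include in nginx.conf does not also load it at http level.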

Performance Tuning and Security Hardening

Routing is only half the battle. Keeping the server fast and secure requires tuning. I added caching for static assets. For services that serve images or CSS, I configured Nginx to send long-lived cache headers so that browsers keep those files locally. This reduces the load on the backend containers and speeds up page loads for repeat visitors.

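```nginx
# Match static asset extensions (case-insensitive); the dot is escaped
# so it matches a literal "." rather than any character.
location ~* \.(jpg|jpeg|png|gif|ico|css|js)$ {
    expires 30d;
    add_header Cache-Control "public, immutable";
    proxy_pass http://app1:3000;
}
```

Security is equally important. I implemented rate limiting to prevent abuse. If a single IP makes too many requests, Nginx rejects the excess (with a 503 by default, or a 429 Too Many Requests once limit_req_status is set). This protects my backend services from request floods and accidental bot traffic.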

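```nginx
# Track clients by IP in a 10 MB shared zone, allowing 10 requests/second each.
limit_req_zone $binary_remote_addr zone=one:10m rate=10r/s;

server {
    ...

    location / {
        # Let bursts of up to 20 extra requests through without delaying them.
        limit_req zone=one burst=20 nodelay;
        # Reply with 429 instead of the default 503 when the limit is hit.
        limit_req_status 429;
        ...
    }
}
```

I also enabled OCSP stapling and used strong SSL ciphers. Regular updates to Nginx and the underlying OS are critical. I set up a cron job to automatically apply security patches. Additionally, I restricted access to admin panels by IP address using the allow and deny directives, ensuring that only I could access sensitive interfaces.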
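
The TLS and access-control pieces can be sketched roughly as follows. Everything specific here is an assumption for illustration: the /admin/ path, the chain file name, and the 203.0.113.10 address (a documentation-range IP) are placeholders, and stapling only takes effect when the certificate names an OCSP responder:

```nginx
server {
    listen 443 ssl;
    server_name blog.example.com;

    ssl_certificate     /etc/nginx/certs/blog.crt;
    ssl_certificate_key /etc/nginx/certs/blog.key;

    # Modern protocol versions only.
    ssl_protocols TLSv1.2 TLSv1.3;

    # OCSP stapling: Nginx fetches the OCSP response and sends it in the handshake.
    ssl_stapling on;
    ssl_stapling_verify on;
    ssl_trusted_certificate /etc/nginx/certs/blog-chain.crt;  # hypothetical file
    resolver 127.0.0.11 valid=30s;  # needed to look up the OCSP responder's host

    # Admin panel reachable from one address only.
    location /admin/ {
        allow 203.0.113.10;   # placeholder, replace with your own address
        deny  all;
        proxy_pass http://app1:3000;
    }

    location / {
        proxy_pass http://app1:3000;
    }
}
```

The allow and deny rules are checked in order, so the final deny all catches every address not explicitly allowed above it.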

Monitoring and Maintenance

With ten services running behind one proxy, visibility is key. I enabled Nginx’s stub_status page to monitor active connections and request counts. For deeper insights, I integrated Prometheus and Grafana. Open-source Nginx has no native Prometheus endpoint, so its metrics reach Prometheus through an exporter, and Grafana visualizes response times, error rates, and bandwidth usage per service.
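
Connection counters of this kind come from Nginx’s stub_status module (nginx -V shows whether a build includes it; look for --with-http_stub_status_module). A minimal sketch, assuming a hypothetical internal port 8080 that is not published by Docker, a hypothetical /nginx_status path, and a typical Docker bridge address range:

```nginx
server {
    listen 8080;                   # hypothetical internal-only port

    location = /nginx_status {     # hypothetical path
        stub_status;               # active connections, accepts, handled, requests
        allow 127.0.0.1;
        allow 172.16.0.0/12;       # typical Docker bridge range; adjust to your network
        deny all;
        access_log off;            # keep scrapes out of the access log
    }
}
```

These counters are server-wide totals. Per-service numbers have to come from the access logs or from the applications themselves.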

This visibility helped me identify a bottleneck in one of my older services. By analyzing the logs, I realized it was making excessive database queries. I optimized the code, and the response time dropped from 2 seconds to 200 milliseconds. Without the proxy’s centralized logging, this issue would have remained hidden.
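
Spotting a slow backend from the logs is easier when each line carries timing data. A sketch of a log format that records it, assuming the format name timed (illustrative) and the default log path of the nginx image:

```nginx
# http context: add the virtual host and timings to every access log line.
log_format timed '$remote_addr [$time_local] "$request" $status '
                 'host=$host rt=$request_time urt=$upstream_response_time';

server {
    listen 80;
    server_name blog.example.com;

    # $request_time covers the whole request; $upstream_response_time is the
    # part spent waiting on the backend container.
    access_log /var/log/nginx/access.log timed;

    location / {
        proxy_pass http://app1:3000;
    }
}
```

A large urt value next to a particular host points at the backend rather than at the proxy or the network.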

Maintenance is straightforward. To update a service, I pull the new image and restart the container, and Nginx routes traffic to the new version (after a reload, if the container came back with a different IP). Zero-downtime updates are possible with proper health checks and rolling restarts. I also use nginx -t to test configuration changes before reloading, preventing accidental outages.
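
Open-source Nginx offers passive health checks only: it marks a backend as unavailable after failed requests rather than probing it. A sketch of how a rolling restart could lean on that, assuming a hypothetical second replica named app1b (both names must resolve when Nginx loads the config):

```nginx
upstream blog_backend {
    # After 3 failures within 10s, skip the server for the next 10s.
    server app1:3000  max_fails=3 fail_timeout=10s;
    server app1b:3000 max_fails=3 fail_timeout=10s;   # hypothetical second replica
}

server {
    listen 80;
    server_name blog.example.com;

    location / {
        proxy_pass http://blog_backend;
        # Retry on the other replica after connection errors, timeouts, or 502/503.
        proxy_next_upstream error timeout http_502 http_503;
    }
}
```

Restarting one replica at a time then leaves the other serving traffic while the first comes back up.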

Key Takeaways

- Centralized Routing: Nginx simplifies access to multiple services by mapping domains to internal ports.

- Security First: SSL termination, rate limiting, and IP restrictions protect your infrastructure.

- Performance Matters: Caching, WebSocket support, and efficient connection handling are essential for a smooth user experience.

- Modularity: Docker and Nginx work best when services are isolated and configured independently.

- Observability: Monitoring metrics and logs helps maintain stability and identify issues early.

Consolidating ten services onto one server using Nginx as a reverse proxy transformed my homelab from a chaotic mess into a streamlined, professional-grade infrastructure. It reduced costs, simplified management, and improved performance. If you are still juggling ports and IPs, it is time to let Nginx handle the heavy lifting.

Ready to optimize your own setup? Start by auditing your current services and planning your domain structure. Then, implement Nginx step-by-step, testing each service before moving to the next. Your future self will thank you.
