I used to think one server was enough for everything. Then I hit the 10-service mark. Suddenly, my homelab wasn’t a cozy tech playground anymore; it was a tangled mess of port conflicts, SSL certificate nightmares, and a single point of failure that kept me up at night.

If you are running a homelab, a small team environment, or even a microservices architecture, managing direct access to individual ports is a recipe for chaos. You don’t want http://192.168.1.50:8080 floating around in your bookmarks. You want clean URLs, unified security, and seamless routing.

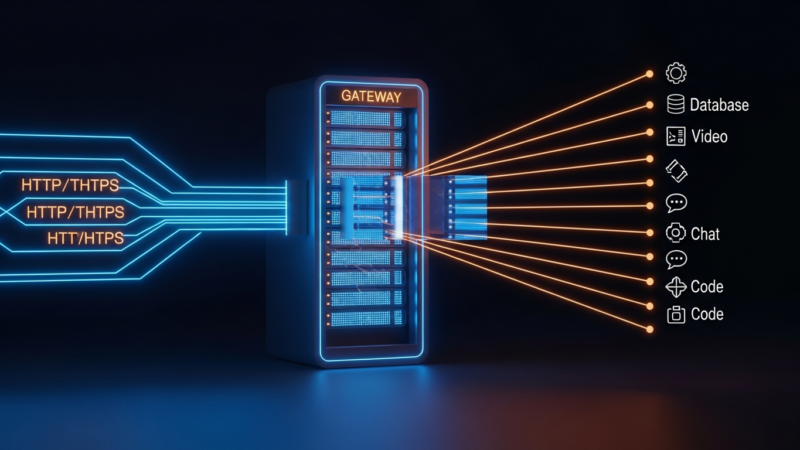

Enter Nginx. Not just as a web server, but as the central nervous system of your infrastructure. Here is how I configured a high-performance Nginx reverse proxy to handle 10 internal services from a single VPS, keeping latency low and security tight.

The Architecture: Why Nginx?

Before diving into the config, let’s talk about why Nginx remains the gold standard for reverse proxying. While tools like Traefik or Caddy offer dynamic discovery, Nginx offers raw, predictable performance. For a single server handling 10 services, a dynamic discovery layer or a full service mesh adds more moving parts than the job needs.

Nginx excels at handling thousands of concurrent connections with a minimal memory footprint. Its event-driven architecture means it doesn’t spawn a new thread for every request. Instead, a master process supervises a small set of worker processes (typically one per CPU core), and each worker multiplexes many connections in a single event loop. When you are routing traffic for services like Home Assistant, Nextcloud, Plex, and various Docker containers, this efficiency translates directly to lower latency and higher throughput.

Furthermore, Nginx’s configuration is declarative and version-controllable. Unlike GUI-based load balancers, your entire routing logic lives in text files. This makes it easy to audit, back up, and replicate across environments. For engineers who value transparency and control, this is non-negotiable.

Core Configuration: The Map Directive Magic

The secret to managing 10+ services without writing 10 separate server blocks lies in the map directive. This feature allows you to create a mapping between a variable (like the hostname) and a backend server group. It keeps your configuration DRY (Don’t Repeat Yourself) and scalable.

Here is the foundational setup. First, define your upstream groups. These are logical names for your backend services.

```nginx
upstream home_assistant {
    server 127.0.0.1:8123;
}

upstream nextcloud {
    server 127.0.0.1:8080;
}

upstream plex {
    server 127.0.0.1:32400;
}
```

Next, use the map block to determine which upstream to use based on the $host variable. Like the upstream blocks, map belongs in the http context. This allows a single server block to handle all incoming HTTP traffic and route it appropriately.

```nginx
map $host $backend {
    default            home_assistant;
    hass.example.com   home_assistant;
    cloud.example.com  nextcloud;
    media.example.com  plex;
}
```

Now, your main server block becomes incredibly simple. It listens on port 80 (or 443 if you handle SSL termination here) and proxies to the mapped backend.

```nginx
server {
    listen 80;
    server_name _;

    location / {
        proxy_pass http://$backend;
        proxy_set_header Host $host;
        proxy_set_header X-Real-IP $remote_addr;
        proxy_set_header X-Forwarded-For $proxy_add_x_forwarded_for;
        proxy_set_header X-Forwarded-Proto $scheme;
    }
}
```

This structure means adding an 11th service only requires adding one line to the map block and one upstream definition. No complex regex or conditional logic needed.
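To make that concrete, here is a sketch of what adding an 11th service might look like. The service (Grafana), its port 3000, and the hostname grafana.example.com are assumptions chosen for illustration; none of them come from the setup described above.

```nginx
# Hypothetical 11th service: Grafana listening on 127.0.0.1:3000
upstream grafana {
    server 127.0.0.1:3000;
}

map $host $backend {
    default              home_assistant;
    hass.example.com     home_assistant;
    cloud.example.com    nextcloud;
    media.example.com    plex;
    grafana.example.com  grafana;   # the one new routing line
}
```

After editing, running nginx -t checks the syntax and nginx -s reload applies the change without dropping existing connections.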

Performance Tuning: Squeezing Every Cycle

Routing is only half the battle. Serving the content efficiently is the other. Nginx has several directives that significantly impact performance when proxying to internal services.

First, enable buffering wisely. By default, Nginx buffers responses from the backend. This is good for slow clients but can increase memory usage. For fast internal networks, you might want to disable buffering for specific services that stream large files, like Plex.

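```nginx
# Path-based example. With the host-based routing above, the two buffering
# directives can instead go in a dedicated server block for media.example.com.
location /plex/ {
    proxy_pass http://plex;
    proxy_buffering off;            # stream responses to the client as they arrive
    proxy_request_buffering off;    # pass request bodies through without spooling
}
```

Second, consider the proxy cache. If you have services that serve static assets or rarely changing data, caching at the Nginx level reduces load on your backend containers. Use the proxy_cache_path directive to define a cache directory and proxy_cache to activate it.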
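As a rough sketch of how those two caching directives fit together: the cache path, the zone name assets_cache, the sizes, the file extensions, and the 10-minute validity below are all assumptions to adjust for your own services, not values from the original setup.

```nginx
# http context: where cached responses live and how large the cache may grow
proxy_cache_path /var/cache/nginx/assets levels=1:2 keys_zone=assets_cache:10m
                 max_size=1g inactive=60m use_temp_path=off;

# inside the existing server block: cache only static-looking files
location ~* \.(css|js|png|jpg|svg|woff2)$ {
    proxy_pass http://$backend;
    proxy_set_header Host $host;

    proxy_cache assets_cache;
    proxy_cache_key $scheme$host$request_uri;   # keep each hostname's entries separate
    proxy_cache_valid 200 10m;                  # cache successful responses for 10 minutes

    # Reports HIT, MISS, EXPIRED, etc. so you can confirm the cache is working
    add_header X-Cache-Status $upstream_cache_status;
}
```

The explicit proxy_cache_key is a deliberate choice here: several hostnames share one server block, so including $host avoids one service’s cached file being served for another.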

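Third, tune the worker connections. In your nginx.conf, ensure worker_processes is set to auto to match your CPU cores. Increase worker_connections to handle high concurrency, keeping in mind that the limit is per worker and that each proxied request uses two connections (one to the client, one to the backend). For a homelab server, 1024-2048 is usually sufficient, but monitor your error log for “too many open files” messages.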
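A minimal sketch of those settings in nginx.conf follows. The worker_rlimit_nofile value is an assumption added here to go with the “too many open files” warning; pick a number that suits your system’s limits.

```nginx
# /etc/nginx/nginx.conf, main context
worker_processes auto;        # one worker per CPU core
worker_rlimit_nofile 8192;    # assumed value: raises the per-worker file-descriptor limit

events {
    worker_connections 2048;  # per worker; counts client and upstream connections
}
```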

Security Hardening: The First Line of Defense

Exposing internal services to the internet (or even your local network) requires robust security. Nginx provides several tools to harden your proxy.

SSL/TLS termination is critical. Use Let’s Encrypt with Certbot to manage certificates. Configure Nginx to redirect all HTTP traffic to HTTPS and enforce strong cipher suites.
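The HTTP-to-HTTPS redirect could look something like the sketch below. It assumes this block takes over port 80 from the plain-HTTP proxy server shown earlier, and that Certbot runs in webroot mode with /var/www/certbot as its challenge directory; that path is an assumption, not part of the original setup.

```nginx
server {
    listen 80;
    listen [::]:80;
    server_name _;

    # Keep the ACME HTTP-01 challenge reachable over plain HTTP (assumed webroot path)
    location /.well-known/acme-challenge/ {
        root /var/www/certbot;
    }

    # Everything else is sent to the same host and path over HTTPS
    location / {
        return 301 https://$host$request_uri;
    }
}
```

The HTTPS listener itself then carries the certificates and protocol settings: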

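```nginx
server {
    # On Nginx 1.25.1+ the http2 listen parameter is deprecated; use "http2 on;" instead
    listen 443 ssl http2;
    server_name _;

    # The certificate must cover every hostname in the map (SANs or a wildcard)
    ssl_certificate /etc/letsencrypt/live/example.com/fullchain.pem;
    ssl_certificate_key /etc/letsencrypt/live/example.com/privkey.pem;

    ssl_protocols TLSv1.2 TLSv1.3;
    ssl_ciphers HIGH:!aNULL:!MD5;

    # location / { ... } with the same proxy_pass and headers as the port-80 block
}
```

Implement rate limiting to prevent abuse. Nginx’s limit_req_zone directive defines a shared request counter keyed by client IP address, and limit_req applies it to a location. This is essential for protecting login pages from brute-force attacks.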

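```nginx
# http context: one shared 10 MB zone keyed by client IP, limited to 1 request/second
limit_req_zone $binary_remote_addr zone=login:10m rate=1r/s;

# inside the server block
location /login {
    # allow bursts of up to 5 extra requests; anything beyond is rejected (503 by default)
    limit_req zone=login burst=5 nodelay;
    proxy_pass http://$backend;
}
```

Additionally, hide Nginx’s version number by setting server_tokens off; in the http block. This makes it harder for attackers to match your server against vulnerabilities tied to a specific Nginx version.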
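For placement, a short sketch of where server_tokens sits relative to the rest of the configuration:

```nginx
http {
    server_tokens off;   # omit the version from the Server header and error pages

    # ... existing upstream, map, and server blocks ...
}
```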

Monitoring and Maintenance

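A reverse proxy is only as good as its visibility. Install nginx-prometheus-exporter to expose metrics in Prometheus format and chart them in Grafana or a similar dashboard. With open-source Nginx, the exporter reads the stub_status endpoint, which covers connection counts and total requests (enough to derive request rates). Response times and error counts have to come from the access logs or from NGINX Plus.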
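With open-source Nginx, the exporter scrapes the stub_status endpoint, so that endpoint has to be enabled first. The sketch below assumes your Nginx build includes the stub_status module and that port 8081 is free on the loopback interface; both the port and the /stub_status path are choices made for this example.

```nginx
server {
    listen 127.0.0.1:8081;      # assumed free port, reachable from this host only

    location = /stub_status {
        stub_status;            # basic connection and request counters
        access_log off;         # keep scrapes out of the access log
        allow 127.0.0.1;
        deny all;
    }
}
```

The exporter is then pointed at http://127.0.0.1:8081/stub_status as its scrape target.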

Regularly review your access logs. Look for patterns that indicate misconfigured clients or potential security threats. Use tools like goaccess to generate real-time HTML reports from your logs, giving you insights into which services are most popular and where bottlenecks might occur.
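To make bottlenecks visible in the logs themselves, one option is a second access log that records which backend handled each request and how long it took. The format name proxy_timing and the log file path are assumptions for this sketch; the standard combined log is left untouched so existing log tools keep parsing it.

```nginx
# http context
log_format proxy_timing '$remote_addr [$time_local] "$request" $status '
                        'host=$host upstream=$upstream_addr '
                        'rt=$request_time urt=$upstream_response_time';

access_log /var/log/nginx/access.log combined;             # unchanged standard log
access_log /var/log/nginx/proxy_timing.log proxy_timing;   # extra log with timings
```

A large gap between rt (total request time) and urt (time spent waiting on the backend) suggests the delay is on the client side of the proxy rather than in the service.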

Key Takeaways

- Centralize Routing: Use Nginx’s map directive to manage multiple services with a single, clean configuration.

- Optimize for Performance: Tune buffering, caching, and worker connections to match your specific workload.

- Secure by Default: Enforce HTTPS, use strong ciphers, and implement rate limiting to protect your services.

- Monitor Continuously: Use exporters and log analysis to maintain visibility into your proxy’s health.

Managing 10 internal services from one server is not just about convenience; it’s about building a resilient, efficient, and secure infrastructure. Nginx provides the tools to do this effectively, provided you understand its configuration nuances. Start with the basics, iterate on your performance settings, and always prioritize security. Your future self will thank you when the next service goes live.
