Artificial Intelligence

Securing AI Agents: Defending CI/CD from Prompt Injection

Autonomous CI/CD is here, but so is the risk of prompt injection. Learn how to secure your AI agents against the next...

Applied AI systems, agent workflows, model operations, and automation that small teams can ship.

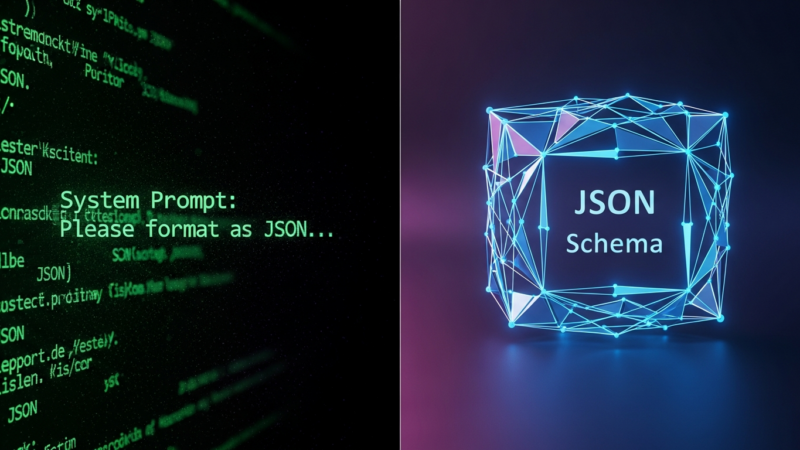

Stop parsing JSON strings. Learn how constrained decoding and Pydantic are replacing prompt engineering with type-safe, reliable Agentic AI.

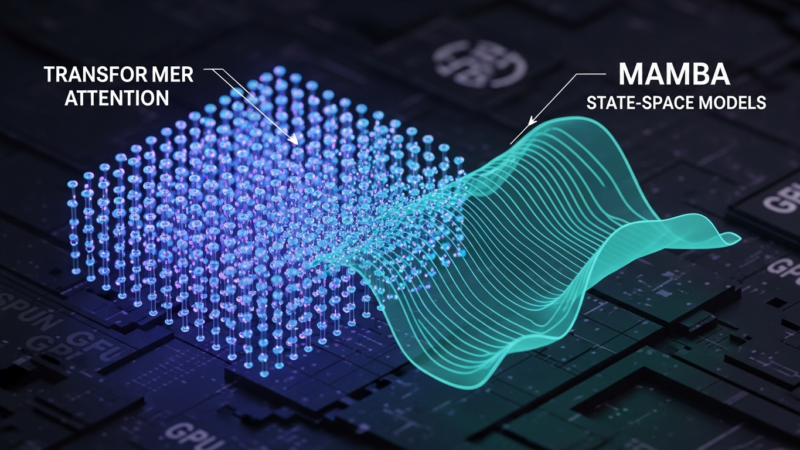

vLLM 0.7.0 breaks the Transformer barrier with native Mamba support and hybrid kernels. Here is your technical deep dive.

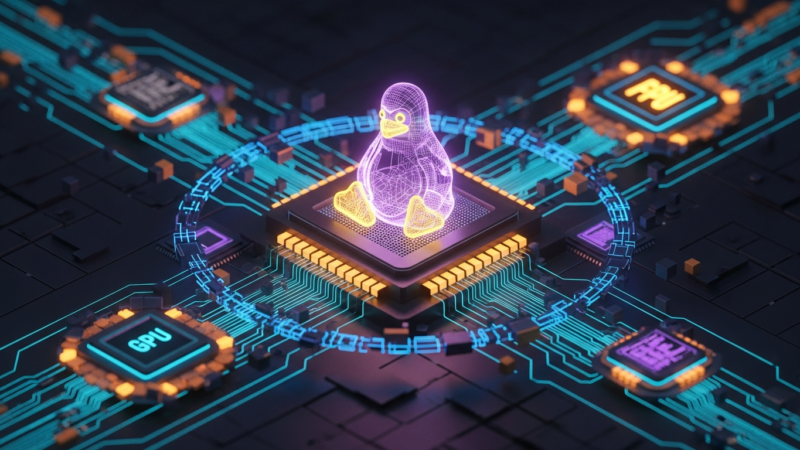

Linux Kernel 6.12 LTS arrives with EEVDF scheduling and heterogeneous compute support, optimizing the OS for the next wave of AI hardware.

Unlock massive cost savings with vLLM 3.0. Learn to serve 1,000 distinct LLM fine-tunes on a single GPU cluster using Dynamic LoRA...

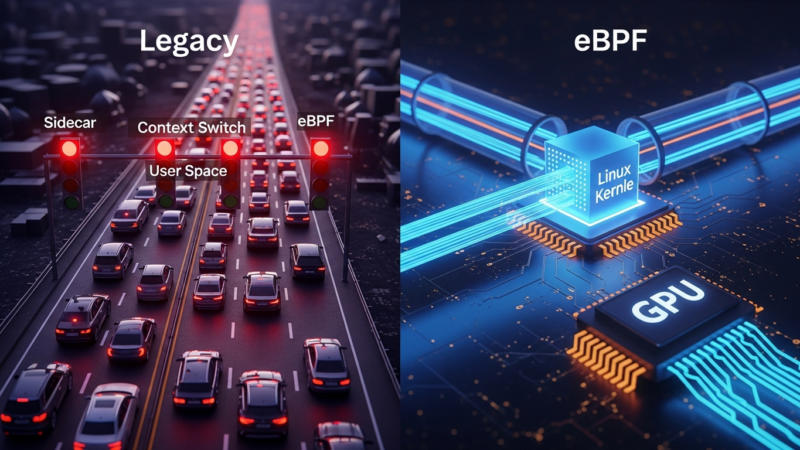

Sidecar proxies cost 20% CPU and kill AI training speed. Here is how eBPF-powered service meshes eliminate the overhead and optimize RDMA.

Stop guessing where your LLM latency comes from. Use eBPF to expose the hidden CPU-GPU overhead and optimize inference in real time.

Struggling with multi-hop inference in RAG? Learn how hybrid vector-graph indexing optimizes workflows for complex reasoning.

Mamba offers linear complexity, but deploying it requires kernel optimization. Here is how to get high-throughput inference with PyTorch 2.5.

WASI-NN 2.0 and WebGPU are enabling high-performance Llama 4 inference directly in the browser, eliminating server latency.