Artificial Intelligence

Running 70B Llama 4 on 16GB RAM: The 1.58-Bit Breakthrough

Discover how 1.58-bit quantization and the BitNet b1.58 architecture enable running massive 70B models like Llama 4 on just 16GB of RAM.

PyTorch 3.0 changes the game with native State Space Models. Here is your deep dive into linear complexity sequence modeling.

Linux 6.14 introduces the first stable Rust GPU drivers. Explore how the Apple AGX driver and Rust memory safety transform open source AI compute.

Move beyond monolithic prompts. Learn the architecture patterns, state management strategies, and fault tolerance required for robust multi-agent AI systems.

WasmEdge 2.0 integrates WebGPU for near-native LLM inference in the browser, offering 100x speedups and local-first AI privacy.

Apache Kafka 4.0 introduces Native Tiered Storage and Vector Data support, cutting infrastructure costs and accelerating AI pipelines.

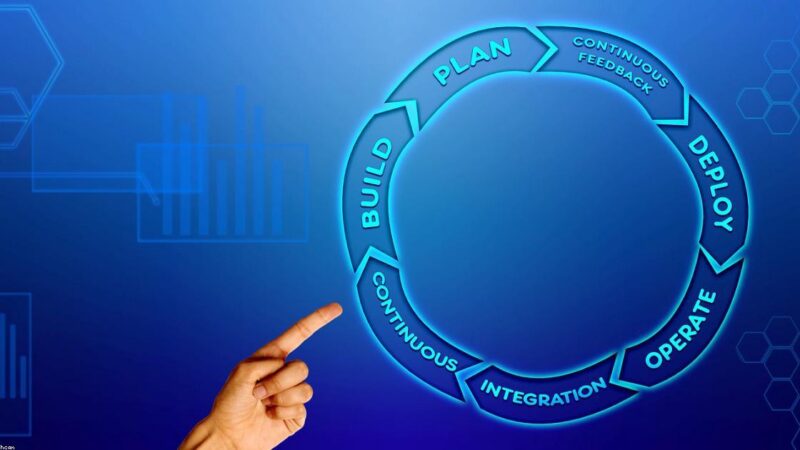

Discover how AI agents and LLM orchestration are transforming engineering workflows, from code generation to DevOps automation.

Move beyond chatbots. Learn how agentic workflows, code-first orchestration, and AI automation can help small dev teams scale efficiently in 2024.

CXL 3.0 memory disaggregation bypasses the HBM tax, slashing AI inference costs by 60% through GPU memory pooling.